TL;DR:

- Technical SEO underpins a website’s ability to rank by ensuring search engines can crawl, render, and index content effectively, especially in AI-driven search landscapes. Many businesses overlook hidden issues like blocked pages, slow load times, or schema misconfigurations that prevent pages from appearing in search results or AI responses. Regular audits, proper structured data, mobile optimization, and careful site infrastructure are essential to maintain visibility and compete in the evolving digital landscape.

Your content can be brilliant, your brand story compelling, and your New Jersey business genuinely worth finding. But if your website’s technical foundation is shaky, Google and AI-powered search platforms may never surface you to the customers who need you most. Technical SEO is the process of optimizing a website’s infrastructure so search engines can efficiently crawl, render, index, and rank its content. In this guide, we break down what technical SEO really means, why it’s more critical than ever in 2026, and exactly what New Jersey businesses should do to build a search presence that holds up.

Key Takeaways

| Point | Details |
|---|---|
| Technical SEO defined | It means optimizing your website’s infrastructure so search engines can find, process, and rank your content. |
| Core ranking mechanics | Crawling, rendering, and indexing are essential for being visible in both traditional and AI-powered searches. |
| Practical technical fixes | Routine checks like sitemaps, mobile optimization, and structured data are key to ongoing search performance. |
| AI search demands more | Semantic HTML, up-to-date schema, and a clear site structure are critical for visibility in AI-driven results. |
| Expert help available | Partnering with technical SEO experts can reveal hidden issues and give your site a search-edge, especially as AI evolves. |

Understanding technical SEO: Why it matters now

Most business owners think SEO means writing blog posts or stuffing keywords into page titles. That’s understandable. Content is visible. You can see it, share it, point to it. Technical SEO, on the other hand, lives beneath the surface, like the hull of a ship. You don’t see it from the deck, but if it’s compromised, you’re not going anywhere.

“Technical SEO is the process of optimizing a website’s infrastructure to ensure search engines can efficiently crawl, render, index, and rank its content.” — Search Engine Land

This distinction matters enormously. A website can have hundreds of pages of excellent content and still rank nowhere if search engine bots can’t access those pages. Broken internal links, misconfigured robots.txt files, slow load times, or missing sitemaps can all prevent Google from seeing what you’ve built. It’s like writing an award-winning novel and locking it in a room with no door.

The rise of AI-driven search makes this even more urgent. Platforms like Google’s AI Overviews depend on structured, machine-readable data to generate responses. A site that isn’t technically sound won’t just struggle in traditional rankings. It won’t show up in AI-generated answers either. Understanding the differences between traditional SEO and AI search helps you appreciate just how foundational technical health really is.

Here are the core benefits of getting technical SEO right:

- Search engine bots can access and crawl every important page on your site

- Your content gets indexed correctly, so it’s eligible to rank

- Faster load times reduce bounce rates and improve user experience

- Mobile optimization supports local search, which is critical for New Jersey businesses

- Structured data makes your site readable by AI systems, not just humans

- A clean URL structure and strong internal linking distribute authority across your pages

Core mechanics: How search engines crawl, render, and index your site

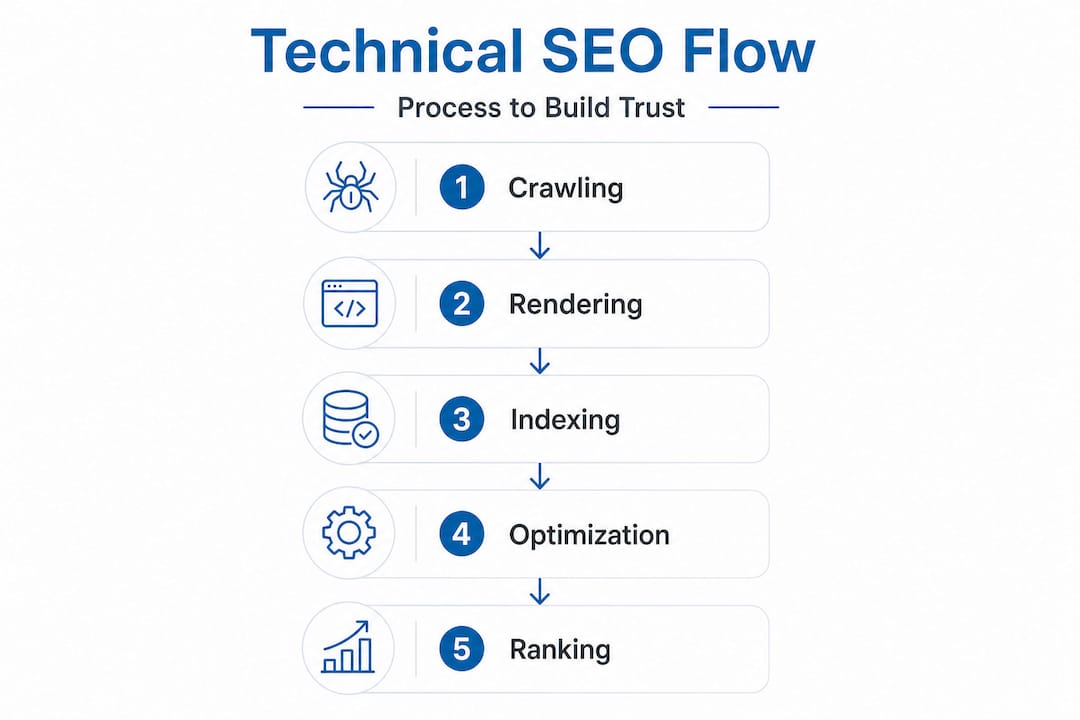

Before you can fix technical SEO problems, you need to understand the sequence search engines follow when processing your site. It’s a four-stage journey, and a breakdown at any point means your content may never reach the rankings.

The core mechanics include crawlability, rendering, indexing, and ranking signals. Crawling is how bots like Googlebot discover pages through links and sitemaps. Rendering is when Google loads your page’s JavaScript, images, and dynamic content to see what a real user would see. Indexing is when that rendered content gets stored in Google’s database. Ranking signals then determine where that content appears in search results.

| Stage | What happens | Common problems | SEO impact |
|---|---|---|---|
| Crawling | Bots follow links and sitemaps to find pages | Blocked URLs, broken links, crawl budget waste | Pages remain undiscovered |
| Rendering | Google loads JS and visual elements | JavaScript-heavy pages load too slowly | Content appears invisible or incomplete |
| Indexing | Content stored in Google’s database | Noindex tags, duplicate content, thin pages | Pages excluded from search results |
| Ranking | Signals determine position in results | Poor Core Web Vitals, missing schema | Lower visibility and fewer clicks |

The most common issues we see on New Jersey business sites are crawl blocks that owners never even knew existed. Someone on the team added a “noindex” tag during development and forgot to remove it. Or a redirect chain grew so long over several site updates that Googlebot simply stopped following it.
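A forgotten “noindex” tag is easy to check for in code. The sketch below is a minimal illustration using only Python’s standard library; the helper name `has_noindex` and the sample pages are our own hypothetical examples, and it only inspects meta tags in the HTML (it does not look at `X-Robots-Tag` HTTP headers, which can also carry the directive).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page's robots meta tags ask search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives


# Hypothetical pages: one left over from staging, one ready for search.
staging_page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
live_page = '<html><head><meta name="robots" content="index, follow"></head></html>'

print(has_noindex(staging_page))  # True
print(has_noindex(live_page))     # False
```

Running a check like this across your key service pages before and after every launch is one way to catch a development-era tag before it costs you rankings.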

Here are the most frequent crawl and indexing problems and how to address them:

- Blocked pages in robots.txt: Review your robots.txt file at yourdomain.com/robots.txt to make sure you’re not accidentally blocking important sections of your site.

- Broken internal links: Run a crawl using an audit tool to identify 404 errors and replace or redirect broken links to live pages.

- Redirect chains and loops: Each redirect adds latency. Keep redirect paths to a single hop wherever possible.

- Duplicate content: Use canonical tags to signal to Google which version of a page should be indexed and ranked.

- Missing or incorrect XML sitemap: Ensure your sitemap is submitted in Google Search Console and contains only indexable pages.
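To make the redirect advice concrete, here is a small sketch of how a chain can be traced and flattened to a single hop. It assumes you have already exported your redirects into a simple source-to-destination mapping; the function names and the sample paths are hypothetical, not taken from any particular audit tool.

```python
def trace_redirects(start_url: str, redirects: dict[str, str]) -> tuple[list[str], bool]:
    """Follow a redirect map from start_url.

    Returns the full chain of URLs visited and a flag that is True
    when the chain loops back on itself.
    """
    chain = [start_url]
    while chain[-1] in redirects:
        next_url = redirects[chain[-1]]
        if next_url in chain:
            return chain + [next_url], True
        chain.append(next_url)
    return chain, False


def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source straight at its final destination (loops are skipped)."""
    flat = {}
    for source in redirects:
        chain, is_loop = trace_redirects(source, redirects)
        if not is_loop:
            flat[source] = chain[-1]
    return flat


# Hypothetical redirect rules accumulated over several site updates.
redirects = {
    "/old-services": "/services-2022",
    "/services-2022": "/services-2024",
    "/services-2024": "/services",
    "/a": "/b",
    "/b": "/a",
}

chain, is_loop = trace_redirects("/old-services", redirects)
print(len(chain) - 1, is_loop)                        # 3 False
print(trace_redirects("/a", redirects))               # (['/a', '/b', '/a'], True)
print(flatten_redirects(redirects)["/old-services"])  # /services
```

The flattened map is what you would hand to your developer or hosting provider: every old URL points directly at the live page, and any loops are left out so they can be fixed by hand.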

Pro Tip: Set up Google Search Console for every site you manage and check the Page indexing report (formerly the Coverage report) monthly. It shows you exactly which pages are indexed, which are excluded, and why. For local businesses competing in New Jersey search results, catching these issues early can be the difference between page one and page five.

You can also compare AI vs. traditional SEO results to understand how these mechanics affect performance across both kinds of search environments.

Key technical SEO elements: Practical fixes and tools

Now that you understand how search engines process your site, let’s look at the specific technical elements you need to check, fix, and maintain. Think of this as your ship’s maintenance checklist. You don’t wait for the engine to fail before you inspect it.

Key technical methodologies include XML sitemaps, robots.txt configuration, site speed optimization, HTTPS/SSL, mobile responsiveness, structured data, clean URL structure, and fixing broken links, duplicate content, and redirect chains. Each of these directly affects whether your site performs well in both traditional and AI-powered search.

Here are your must-do technical checks for every New Jersey business website:

- XML sitemap: Submit an up-to-date sitemap through Google Search Console. Include only canonical, indexable pages.

- Robots.txt: Confirm you’re not blocking Googlebot or AI crawlers from key pages.

- HTTPS/SSL certificate: Google treats HTTPS as a ranking signal. If your site still loads over HTTP, fix this immediately.

- Mobile responsiveness: Google uses mobile-first indexing, meaning it primarily evaluates the mobile version of your site.

- Core Web Vitals: Measure Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS) using PageSpeed Insights.

- Schema markup: Add structured data for your business type, products, services, FAQs, and reviews.

- Clean URL structure: Use short, descriptive, keyword-relevant URLs. Avoid dynamic strings and unnecessary parameters.
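For the sitemap item in the checklist above, the rule “include only canonical, indexable pages” can be expressed in a few lines. This is a rough sketch, assuming your page inventory is available as a list of records; the field names (`url`, `canonical`, `indexable`, `lastmod`) and the example.com URLs are hypothetical placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Build sitemap XML containing only indexable pages that are their own canonical."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page["indexable"] or page["canonical"] != page["url"]:
            continue  # skip noindex pages and duplicates that point elsewhere
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
        ET.SubElement(url_el, "lastmod").text = page["lastmod"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical page inventory for illustration.
pages = [
    {"url": "https://www.example.com/", "canonical": "https://www.example.com/",
     "indexable": True, "lastmod": "2026-01-15"},
    {"url": "https://www.example.com/services/", "canonical": "https://www.example.com/services/",
     "indexable": True, "lastmod": "2026-01-10"},
    {"url": "https://www.example.com/thank-you/", "canonical": "https://www.example.com/thank-you/",
     "indexable": False, "lastmod": "2025-11-02"},
    {"url": "https://www.example.com/services/?ref=ad", "canonical": "https://www.example.com/services/",
     "indexable": True, "lastmod": "2026-01-10"},
]

print(build_sitemap(pages))
```

In this example only the homepage and the services page make it into the file. The thank-you page is excluded because it is not indexable, and the parameterized URL is excluded because its canonical points to another page.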

When it comes to running audits, choosing the right tool matters. Semrush Site Audit detects 23% more issues than Ahrefs in areas like JavaScript rendering, internal links, and structured data, which is significant if your site relies heavily on dynamic content.

| Tool | Detection strength | Best for |
|---|---|---|
| Semrush Site Audit | JavaScript rendering, structured data, internal links | Full-scale site audits, ongoing monitoring |
| Ahrefs Site Audit | Backlink analysis, content gaps, crawl issues | Link-focused audits and competitive research |
| Screaming Frog | Deep crawl data, redirect mapping, on-page elements | Technical deep dives and in-house developer use |

Pro Tip: Don’t just chase Core Web Vitals scores. Prioritize schema markup for your specific business type, whether that’s a law firm, a restaurant, or a medical practice. Local business schema is one of the fastest ways to improve visibility in AI-powered search results and rich results on Google.
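As an illustration of what local business schema looks like in practice, the sketch below generates a JSON-LD snippet using schema.org’s `LocalBusiness` type. The function name and every business detail in the example are hypothetical; a law firm, restaurant, or medical practice would typically swap in a more specific schema.org type and validate the result with a structured data testing tool before publishing.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code,
                          phone, url, opening_hours, areas_served,
                          business_type="LocalBusiness"):
    """Return a <script> tag containing schema.org JSON-LD for a local business."""
    data = {
        "@context": "https://schema.org",
        "@type": business_type,
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": "US",
        },
        "openingHours": opening_hours,
        "areaServed": areas_served,
    }
    # Escape "</" so a stray "</script>" in the data cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical business details for illustration only.
snippet = local_business_jsonld(
    name="Example Roofing Co.",
    street="123 Main Street",
    city="Anytown",
    region="NJ",
    postal_code="07000",
    phone="+1-555-555-0100",
    url="https://www.example.com/",
    opening_hours=["Mo-Fr 08:00-17:00"],
    areas_served=["Anytown", "Nearby Township"],
)
print(snippet)
```

The output goes into the page’s HTML, usually in the `<head>`. Keeping the name, address, and phone number identical to your Google Business Profile avoids sending mixed signals.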

For more on turning these audits into rankings, explore powerful optimization techniques that go beyond basic fixes.

Technical SEO in the AI era: Adapt or get left behind

The search landscape has shifted. Google’s AI Overviews, generative answers, and machine learning-powered ranking signals have changed what it means for a website to be “technically sound.” A clean robots.txt file is no longer enough. Your site needs to be machine-readable in a much deeper sense.

For AI-driven search, prioritize structured data for entity recognition, semantic HTML, Core Web Vitals, and a clean information architecture to aid machine learning categorization. Schema is shifting from generating rich results to enabling machine understanding of entities.

What does that mean in practice for a New Jersey business? It means Google’s AI needs to understand not just that your page exists, but what your business is, who it serves, where it operates, and why it’s authoritative. Schema markup communicates all of this directly to AI systems.

Branded queries with AI Overviews see an 18% lift in click-through rate compared to standard results. That’s a significant opportunity, but only for sites that are technically configured to appear in those features.

Here are the steps New Jersey businesses should take to stay visible in AI-powered search:

- Add LocalBusiness schema with your full name, address, phone, hours, and service areas

- Use semantic HTML elements such as `<header>`, `<nav>`, `<main>`, and `<article>`

- Build a clear information architecture with logical category pages and internal linking

- Improve Core Web Vitals so Google’s systems rank your site as a fast, stable experience

- Ensure your Google Business Profile is accurate and synced with the schema on your website

- Start with Google Search Console audits for indexing and crawl errors, then prioritize mobile speed and schema for local AI visibility
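For the Core Web Vitals step, it helps to know what “fast and stable” means in numbers. The sketch below rates a page against Google’s published thresholds (LCP of 2.5 seconds or less, INP of 200 milliseconds or less, and CLS of 0.1 or less count as good). The function names and the sample measurements are hypothetical; in practice the values would come from PageSpeed Insights or your own field data.

```python
# (good_up_to, needs_improvement_up_to) for each metric; anything above is "poor".
THRESHOLDS = {
    "lcp_ms": (2500, 4000),   # Largest Contentful Paint, milliseconds
    "inp_ms": (200, 500),     # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),       # Cumulative Layout Shift, unitless score
}


def rate_metric(metric: str, value: float) -> str:
    """Classify a single measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def rate_page(measurements: dict[str, float]) -> dict[str, str]:
    """Rate every Core Web Vitals measurement for one page."""
    return {metric: rate_metric(metric, value) for metric, value in measurements.items()}


# Hypothetical mobile measurements for a service page.
print(rate_page({"lcp_ms": 3100, "inp_ms": 180, "cls": 0.31}))
# {'lcp_ms': 'needs improvement', 'inp_ms': 'good', 'cls': 'poor'}
```

A page like the one above would be worth fixing in order of severity: layout shift first, then load time.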

One topic that’s generating real debate in 2026: blocking AI bots. Some businesses are restricting access to AI training crawlers like GPTBot to protect their intellectual property. That’s a legitimate concern. But blocking all AI bots indiscriminately can reduce your visibility in AI-generated answers. A selective approach, allowing retrieval bots while restricting training bots, often makes more strategic sense. Explore the pros and cons of AI search optimization to make an informed decision for your specific business.
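Here is a rough sketch of what a selective approach could look like, checked with Python’s built-in robots.txt parser. The rules below are an assumption for illustration, not a recommendation for every site: they block GPTBot (a training crawler), allow OAI-SearchBot (used here as an example of a retrieval-focused crawler), and leave everything else open except a hypothetical `/admin/` area. Crawler names change, so confirm current user-agent strings in each vendor’s documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block a training crawler, allow a retrieval crawler.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: *
Disallow: /admin/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given crawler may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


service_page = "https://www.example.com/services/"

print(is_allowed(ROBOTS_TXT, "GPTBot", service_page))         # False
print(is_allowed(ROBOTS_TXT, "OAI-SearchBot", service_page))  # True
print(is_allowed(ROBOTS_TXT, "Googlebot", service_page))      # True
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://www.example.com/admin/login"))  # False
```

Testing rules this way before you deploy them helps you avoid the indiscriminate block described above, where a single line meant for one bot quietly shuts out the crawlers you want.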

Crawling, indexing, and AI: Key distinctions and strategies

Crawling and indexing are often used interchangeably, but they mean different things and have very different implications for your SEO strategy.

Think of crawling as a scout. Google sends Googlebot out to explore your site and take notes. Indexing is when those notes get filed into Google’s library. A page can be crawled but not indexed, which happens more often than you’d think, especially with pages that have thin content, duplicate signals, or explicit noindex tags. It’s a crucial distinction.

“Crawlable but non-indexed pages are common in technical SEO. Traditional SEO focuses on Googlebot, but AI SEO involves multi-bot environments where different crawlers serve training versus retrieval purposes.” — Search Engine Land

For local New Jersey businesses, the stakes are real. A service page that Googlebot crawls but never indexes won’t drive leads. Neither will a page that AI systems can’t interpret. Here’s a numbered checklist to ensure both bots and AI systems see the right content on your site:

1. Confirm all important pages are included in your XML sitemap and are set to “index, follow.”

2. Check robots.txt to ensure you’re not blocking Googlebot, Bingbot, or retrieval-focused AI crawlers.

3. Use canonical tags to consolidate duplicate content and direct ranking authority to the right page.

4. Implement structured data on every page type: home, services, contact, blog, and location pages.

5. Set up regular crawl monitoring in Google Search Console and act on coverage errors within 30 days.

6. Review your site’s internal linking to ensure no important page is more than three clicks from your homepage.
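The three-click guideline in the last item is straightforward to measure once you have a crawl export. The sketch below uses a breadth-first search over an internal link map to find each page’s click depth from the homepage. The function names and the sample site structure are hypothetical, and the link map is assumed to come from whatever crawler you already use.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], homepage: str) -> dict[str, int]:
    """Breadth-first search: fewest clicks needed to reach each page from the homepage."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_too_deep(links: dict[str, list[str]], homepage: str, max_clicks: int = 3) -> list[str]:
    """List pages deeper than max_clicks, including pages no internal link reaches."""
    depths = click_depths(links, homepage)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(p for p in all_pages if depths.get(p, float("inf")) > max_clicks)


# Hypothetical internal link map: page -> pages it links to.
links = {
    "/": ["/services", "/blog"],
    "/services": ["/services/roofing"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/old-post"],
    "/orphan": ["/"],
}

print(click_depths(links, "/")["/blog/old-post"])  # 4
print(pages_too_deep(links, "/"))                  # ['/blog/old-post', '/orphan']
```

Pages that show up in this list are candidates for a link from a category page or the main navigation. Orphaned pages, like `/orphan` here, are treated as infinitely deep because nothing on the site links to them.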

For businesses targeting specific New Jersey markets, local SEO fundamentals layer on top of this technical foundation to drive geographically relevant traffic.

Our take: Why most businesses get technical SEO wrong (and how to do it right)

We’ve audited hundreds of New Jersey business websites, and the pattern is consistent. Business owners invest in design, pay for blog content, run ads, and then wonder why their organic traffic is flat. The culprit is almost always technical. Not always obvious, but almost always technical.

The most common mistake we see is relying on plugins or automated “fix-it” tools as a complete solution. A plugin can help you add schema markup or generate a sitemap, but it won’t identify redirect chains buried in your site architecture, fix crawl budget waste from thousands of low-value paginated URLs, or flag the fact that your JavaScript framework is making pages invisible to Googlebot during rendering. Plugins are crew members, not the captain.

Ignoring mobile speed and local schema in the AI era has a real cost. We’ve watched businesses lose local market share not because their competitors produced better content, but because those competitors had faster mobile load times and properly configured LocalBusiness schema. In a competitive New Jersey market, those technical details become measurable revenue differences.

Our most practical advice: audit your site every quarter, not just when rankings drop. By then, you’re already behind. Quarterly audits catch crawl errors before they compound, identify schema that needs updating after a service change, and keep your Core Web Vitals within Google’s recommended thresholds. Mastering local SEO is an ongoing process, not a one-time fix.

We also believe deeply that businesses need partners who understand both traditional and AI SEO. The technical signals that help you rank in classic Google search and the structural requirements for appearing in AI Overviews are converging. Working with a team that sees the full picture gets you better results, faster, and keeps you from having to rebuild your strategy every time the search landscape shifts.

Take the next step: Expert technical SEO for New Jersey businesses

Your website has the potential to be your most powerful lead generation tool. But that potential only gets realized when the technical foundation is solid enough to support it.

Whether you’re just getting started or managing a complex site that’s been patched together over the years, we’re ready to help you chart a course toward stronger search performance. If you’re newer to this space, start with our SEO for beginners resource to build your knowledge base. For businesses looking to show up in AI-generated answers, our guide on how to improve your ChatGPT ranking gives you a clear playbook. And when you’re ready to put it all together, our website optimization strategies will help you turn technical improvements into real business results. Let’s build something search engines and customers can’t ignore.

Frequently asked questions

What are examples of technical SEO issues?

Common issues include slow site speed, broken internal links, poor mobile responsiveness, missing XML sitemaps, and improper schema markup. These problems prevent search engines from crawling and indexing your site correctly, which directly affects rankings.

How is technical SEO different from on-page and off-page SEO?

Technical SEO focuses on infrastructure, while on-page SEO addresses content and keywords, and off-page SEO relates to backlinks and external authority signals. All three work together, but technical SEO is the foundation the other two rely on.

Why does structured data matter for AI-driven search?

Structured data helps AI systems understand your business as an entity, not just a collection of text. Schema aids machine learning categorization, which shifts schema’s value beyond rich results into how AI surfaces your business in generated answers.

How do you check for technical SEO problems?

Use Google Search Console to identify crawl errors and indexing issues, then run a deeper audit with tools like Semrush or Ahrefs. Semrush detects 23% more issues than Ahrefs in JavaScript rendering and structured data, making it especially useful for modern, dynamic websites.

Michael Fleischner is the founder of Big Fin SEO, a New Jersey-based local SEO agency helping service-area and multi-location businesses increase visibility, generate qualified leads, and drive measurable revenue from search.

He is a TEDx speaker, Amazon-published author of The 7 Figure Freelancer, and a frequent speaker on SEO, AI-driven marketing, and personal branding.
